Look up at the summer night sky. You see stars, of course. There are bright stars like Vega and Arcturus, middling stars like Polaris (the North Star) and Mizar (the star in the “bend” of the Big Dipper’s handle), and faint stars like Rho Leo (an unremarkable star in the constellation of Leo, the lion) and Alcor (the dim companion of Mizar). When we look at these stars, what are we seeing? How do we know what the stars are? You have probably heard that the sun is a star, but are all these stars suns?

I have previously discussed in this blog how a person with excellent vision will see stars as small, round dots of differing apparent sizes, or magnitudes. Magnitude means bigness, as this old discussion of the scale of magnitudes indicates:

The fixed Stars appear to be of different Bignesses…. Hence arise the Distribution of Stars, according to their Order and Dignity, into Classes; the first Class… are called Stars of the first Magnitude; those that are next to them, are Stars of the second Magnitude… and so forth, ‘till we come to the Stars of the sixth Magnitude, which comprehend the smallest Stars that can be discerned with the bare Eye….

The idea of magnitude as bigness also shows clearly in this old discussion of the changing magnitude of a “new star” or “nova”:

…from the sixteenth to the twenty-seventh of the same month… it changed bigness several times, it was sometimes larger than the biggest of those two stars, sometimes smaller than the least of them, and sometimes of a middle size between them. On the twenty-eighth of the same month it was become as large as the star in the beak of the Swan, and it appeared larger from the thirtieth of April to the sixth of May. On the fifteenth it was grown smaller; on the sixteenth it was of a middle size between the two, and from this time it continually diminished till the seventeenth of August, when it was scarce visible to the naked eye.

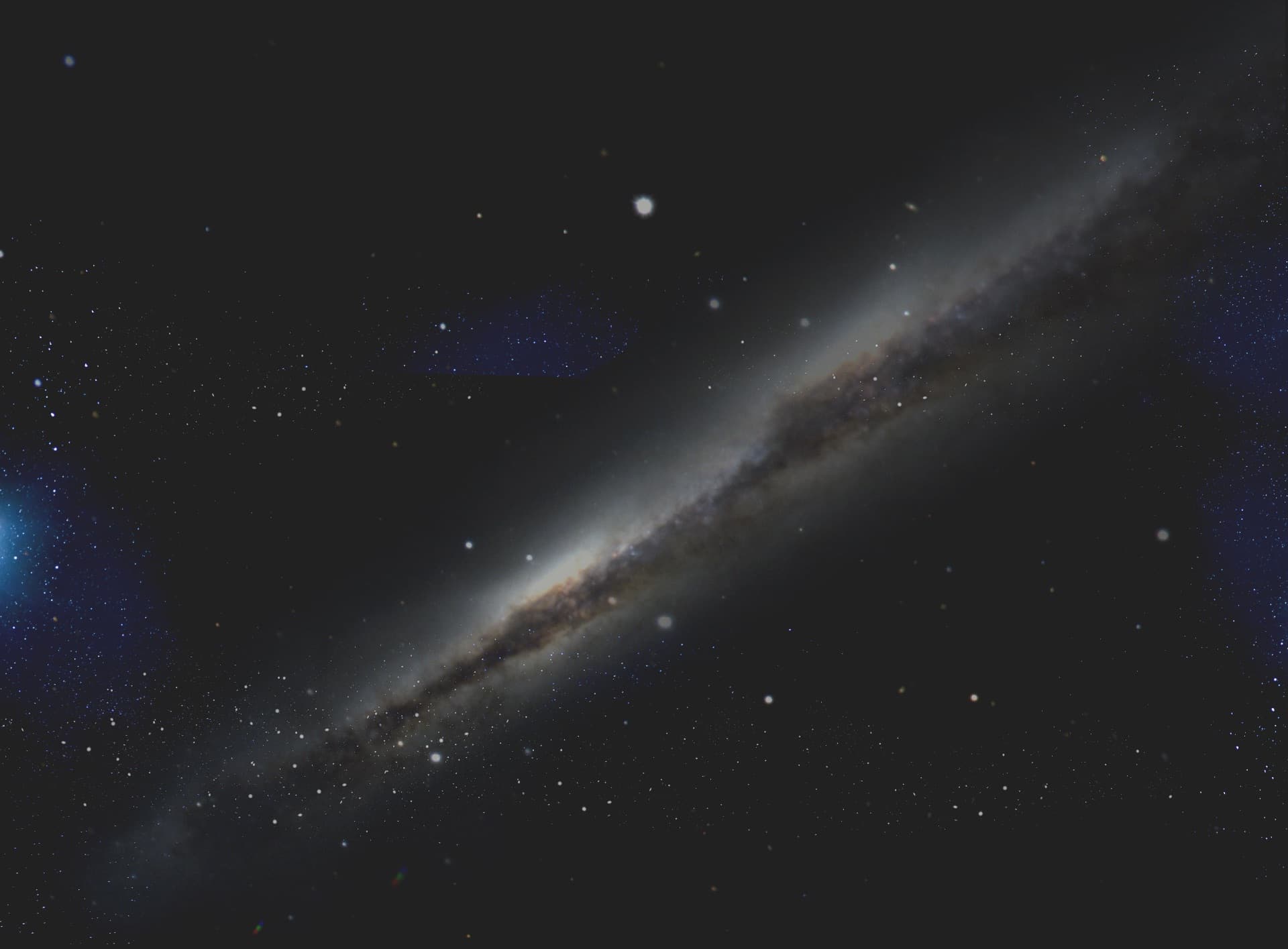

More stars of the summer sky. Note how Stellarium represents the stars as dots of differing sizes. The web page for Stellarium states that Stellarium “shows a realistic sky… just like what you see with the naked eye….”

This system of classifying stars by their apparent sizes dates back to the ancient Greek astronomer Hipparchus, nearly two centuries before Christ. However, following the development of the telescope in the early seventeenth century, astronomers came to realize that the apparent sizes of stars are spurious: Arcturus looks larger than Rho Leo not because of its physical size, but because of its light output and how that light interacts with the eye.

This is perhaps better understood by imagining that one night you and a friend experiment with two identical small LED flashlights, one of which has a weak battery, and the other of which has a very strong battery. Your friend walks two hundred paces directly away from you, then stops, turns on the lights, and aims them both back toward you. The brighter light will appear larger to you than the fainter light, even though both are the same physical size. Try it and see. When it comes to point-like light sources, be they distant flashlights or distant stars, the apparent size you see resides not in the lights but in your eyes.

Thus in the nineteenth century astronomers reworked the magnitude scale so that it wasn’t about size. They came to understand that the light that reaches Earth from a first magnitude star is about 100 times more intense than the light that reaches Earth from a sixth magnitude star. The intensity is measured in power per unit of area: watts per square centimeter, or W/cm². Each step of one magnitude corresponds to a factor of about 2.5 in light intensity; the light from a (very faint) fifth magnitude star is 2.5 times more intense than the light from a (barely discernible to the eye) sixth magnitude star. A fourth magnitude star (still pretty faint) has 2.5 times the light intensity of that fifth magnitude star and 2.5×2.5=6.25 times the light intensity of the sixth magnitude star. Thus a bright first magnitude star is 2.5×2.5×2.5×2.5×2.5=100* times brighter in terms of intensity than that barely discernible sixth magnitude star.
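For readers who like to check the arithmetic themselves, here is a minimal Python sketch of the relationship just described. The names `POGSON_RATIO` and `intensity_ratio` are my own labels for illustration, not standard ones. The sketch assumes the per-magnitude factor is the exact fifth root of 100 (of which 2.5 is a rounded value), so that five magnitude steps give a factor of exactly 100.

```python
# Intensity factor for one magnitude step, chosen so that
# five steps multiply out to exactly 100 (roughly 2.5119).
POGSON_RATIO = 100 ** (1 / 5)


def intensity_ratio(fainter_mag, brighter_mag):
    """Return how many times more intense the brighter star's light is.

    Larger magnitude numbers mean fainter stars, so the exponent is
    the fainter magnitude minus the brighter one.
    """
    return POGSON_RATIO ** (fainter_mag - brighter_mag)


if __name__ == "__main__":
    # Sixth magnitude versus fifth, fourth, and first magnitude.
    for brighter in (5, 4, 1):
        ratio = intensity_ratio(6, brighter)
        print(f"magnitude {brighter} vs. magnitude 6: {ratio:.2f} times the intensity")

    # The rounded factor of 2.5 falls a little short of 100 over five steps.
    print(f"2.5 multiplied five times: {2.5 ** 5:.2f}")
```

Running it lets you compare the exact factor with the rounded 2.5 used in the text; the small gap between the two is why five factors of 2.5 do not come out to precisely 100.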

Why this system? Because the original magnitude scale was based on what the eye sees, and the eye works on a multiplicative scale, not a simple additive one. To see this for yourself, gather up about ten birthday cake candles and turn out all the lights in the room. Look around; it’s dark in the room! Now light one candle. Note how one candle changes things a lot; with one candle you can really see. Now light a second candle, so that two candles are burning. Note how the second candle does make the room brighter, but the change it causes is not as dramatic as the change caused by the first. The third candle causes even less change, the fourth less still, and by the time you go from nine candles to ten you hardly notice the difference. Your eye is not attuned to additive increases in light; each additional candle does not seem to increase the illumination of the room equally, even though each is adding an equal amount of light power. No, your eye is attuned to multiplicative increases in light.
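The candle experiment can be put into numbers with a short Python sketch. It assumes that every candle contributes the same amount of light (as stated above) and uses the standard magnitude relation, in which a change in brightness expressed in magnitudes is 2.5 times the base-10 logarithm of the intensity ratio. The function name `magnitude_step` is a hypothetical one chosen for this illustration. The jump from zero candles to one is left out, because a ratio measured from complete darkness is undefined.

```python
import math


def magnitude_step(candles_before, candles_after):
    """Brightening, in magnitudes, when the number of identical candles changes.

    Uses delta_m = 2.5 * log10(intensity ratio), with the intensity
    assumed proportional to the number of burning candles.
    """
    return 2.5 * math.log10(candles_after / candles_before)


if __name__ == "__main__":
    # Each added candle supplies the same extra light, but the
    # multiplicative change (and so the magnitude change) keeps shrinking.
    for n in range(1, 10):
        factor = (n + 1) / n
        step = magnitude_step(n, n + 1)
        print(f"{n} -> {n + 1} candles: light x{factor:.2f}, about {step:.2f} magnitudes")
```

The additive change is the same in every row (one candle), while the multiplicative change drops from a doubling at the start to only about an eleven percent increase at the end, which matches what your eye reports.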

Understanding the multiplicative nature of the magnitude scale makes it easier to determine what we are seeing when we look at the stars, and how we know what the stars are. We will learn more about magnitude next week.

*OK, for those of you who multiplied this out and found out that the result is not 100 but 97.66… well, actually the multiplying factor in the magnitude scale is not exactly 2.5, but 2.5118864…. This number is called “Pogson’s ratio,” after N. R. Pogson, the British astronomer who proposed this system in 1856.